The goal of this tutorial is to have a containerized application built, tested, and deployed on a web server using Docker and GitLab.

This project follows the ideas of this post: Continuous Integration and Deployment with Gitlab, Docker-compose, and DigitalOcean, and the course Authentication with Flask, React, and Docker.

The project GitLab repository is here. Note that only source code files are stored there. The project does not have CI/CD enabled. To follow the tutorial, create your own GitLab project and experiment with CI/CD there.


Web Application

The application comprises two services: a Flask REST API backend and a React Frontend.

REST API Backend

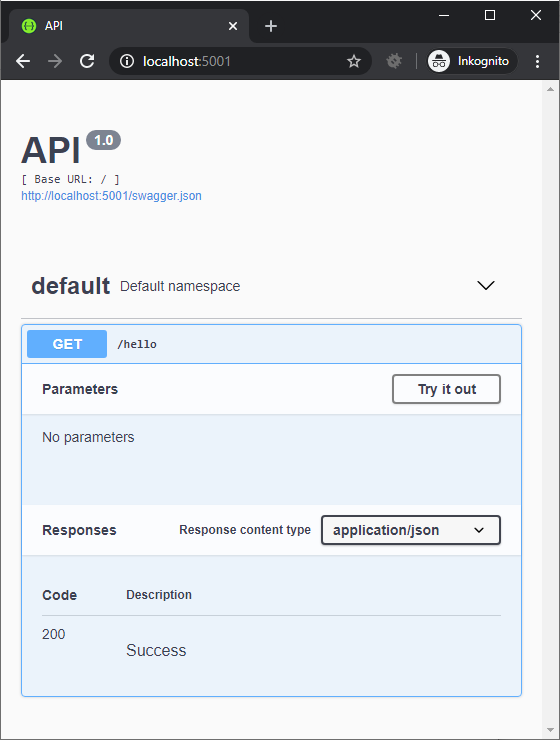

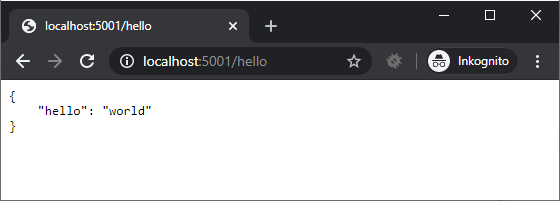

The backend is a simple REST API app built using Flask and Flask-RESTX. Since the focus of this project is the CI/CD, we only need a single REST endpoint to prove the concept:

from flask import Flask
from flask_restx import Resource, Api
from flask_cors import CORS, cross_origin

app = Flask(__name__)
api = Api(app)
cors = CORS(app, resources={r"/*": {"origins": "*"}})


@api.route('/hello')
class HelloWorld(Resource):
    def get(self):
        return {'hello': 'world'}


if __name__ == '__main__':
    app.run(host='0.0.0.0', port=80)

In this module, we also use the flask_cors extension to allow cross-origin requests from the client app.

We also need a simple test for the test stage of the CI:

def test_hello():
    assert True

The production setup requires one more file, wsgi.py, which gunicorn uses to load the application:

from api import app

if __name__ == "__main__":
    app.run()

The requirements.txt file lists the dependencies that the app needs to run:

aniso8601==8.0.0

atomicwrites==1.4.0

attrs==19.3.0

click==7.1.2

colorama==0.4.3

Flask==1.1.2

Flask-Cors==3.0.8

flask-restx==0.2.0

gunicorn==20.0.4

itsdangerous==1.1.0

Jinja2==2.11.2

jsonschema==3.2.0

MarkupSafe==1.1.1

more-itertools==8.2.0

packaging==20.3

pluggy==0.13.1

py==1.8.1

pyparsing==2.4.7

pyrsistent==0.16.0

pytest==5.4.2

pytz==2020.1

six==1.14.0

wcwidth==0.1.9

Werkzeug==1.0.1

Containerizing the REST API App

We will use three Dockerfiles: one for local development, one to build and test the app in the CI, and one for the release version.

First, let’s create a .dockerignore file to tell Docker what directories and files should not be copied into images:

venv/

.pytest_cache/

.idea/

The development Dockerfile is quite simple:

# pull official base image

FROM python:3.8.2-alpine3.11

WORKDIR /usr/src/app

COPY requirements.txt ./

RUN pip install -r requirements.txt

COPY . .

EXPOSE 80

CMD [ "python", "./api.py" ]

It pulls a base image, sets up the app’s working directory, installs the app’s dependencies, copies the app code, and starts the development server.

We will test the development setup in a moment with docker-compose, but first, let’s prepare the client application.

Client Web App

The client is a React application that issues a single call to the backend REST API. In our project, we place it under the client/ folder.

The dependencies, settings, and scripts are defined in the package.json file:

{
  "name": "client",
  "version": "1.0.0",
  "description": "",
  "main": "index.js",
  "scripts": {
    "start": "react-scripts start",
    "build": "react-scripts build",
    "test": "react-scripts test"
  },
  "author": "",
  "license": "ISC",
  "dependencies": {
    "axios": "^0.19.2",
    "react": "^16.13.1",
    "react-dom": "^16.13.1",
    "react-scripts": "3.4.1"
  },
  "browserslist": {
    "production": [
      ">0.2%",
      "not dead",
      "not op_mini all"
    ],
    "development": [
      "last 1 chrome version",
      "last 1 firefox version",
      "last 1 safari version"
    ]
  },
  "devDependencies": {
    "@testing-library/react": "^10.0.4"
  }
}

The application’s entry point is the index.js file under the src/ directory:

import React from "react";
import ReactDOM from "react-dom";
import App from "./App";

ReactDOM.render(
  <App/>,
  document.getElementById("root")
);

It renders the App component that we define like this:

import React, {Component} from "react";
import axios from 'axios';

const apiUrl = process.env.NODE_ENV === 'production' ? process.env.REACT_APP_BACKEND_SERVICE_URL : 'http://localhost:5001';

class App extends Component {
  state = {
    message: 'start'
  }

  componentDidMount() {
    this.getMessage();
  }

  getMessage = () => {
    axios
      .get(`${apiUrl}/hello`)
      .then(res => {
        this.setState({message: `hello ${res.data.hello}`});
      })
      .catch(err => {
        console.log(err);
      });
  }

  render() {
    return (
      <div>
        <h1>Sample App V. 2</h1>
        <p>Message: {this.state.message}</p>
      </div>
    );
  }
}

export default App;

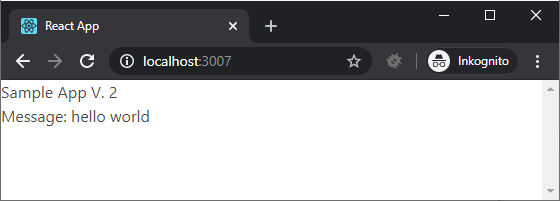

This component renders some simple HTML and a message that it loads from the backend app using axios. In a production build, the API URL is taken from the environment variable REACT_APP_BACKEND_SERVICE_URL; in any other mode it is fixed to http://localhost:5001. The call is made in the componentDidMount lifecycle method.
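The URL selection in the component can be restated outside React. The sketch below mirrors the ternary expression in Python purely for illustration; `resolve_api_url` and the example URL are hypothetical and not part of the project.

```python
DEFAULT_DEV_URL = "http://localhost:5001"


def resolve_api_url(env):
    """Mirror the component's ternary: use the configured URL only in production."""
    if env.get("NODE_ENV") == "production":
        # None here corresponds to `undefined` in JavaScript when the
        # variable was not set at build time.
        return env.get("REACT_APP_BACKEND_SERVICE_URL")
    # In every other mode the variable is ignored, even if it is set.
    return DEFAULT_DEV_URL


print(resolve_api_url({"NODE_ENV": "development",
                       "REACT_APP_BACKEND_SERVICE_URL": "https://api.example.test"}))
print(resolve_api_url({"NODE_ENV": "production",
                       "REACT_APP_BACKEND_SERVICE_URL": "https://api.example.test"}))
```

The first call shows that a development build ignores the variable, so changing it only has an effect on production builds.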

For the test stage, we define a simple test using @testing-library/react:

import React from "react";
import { render, cleanup } from "@testing-library/react";
import App from "../App";

afterEach(cleanup);

it("renders", () => {
  const { asFragment } = render(<App />);
  expect(asFragment()).toMatchSnapshot();
});

/client/src/__tests__/App.test.jsx

To build the client app, we will also need a public folder that is generated by create-react-app:

├── client

│ ├── public

│ │ ├── favicon.ico

│ │ ├── index.html

│ │ ├── logo192.png

│ │ ├── logo512.png

│ │ ├── manifest.json

│ │ └── robots.txt

Containerizing the Client App

First, we define a .dockerignore file that lists what Docker should not copy into the image:

build/

node_modules/

.idea/

The development Dockerfile will have this content:

# pull official base image

FROM node:13.10.1-alpine

# set working directory

WORKDIR /usr/src/app

# add `/usr/src/app/node_modules/.bin` to $PATH

ENV PATH /usr/src/app/node_modules/.bin:$PATH

# install and cache app dependencies

COPY package.json /usr/src/app/package.json

COPY package-lock.json /usr/src/app/package-lock.json

RUN npm ci

RUN npm install react-scripts@3.4.0 -g --silent

# add app

COPY . /usr/src/app

# start app

CMD ["npm", "start"]

Here, we pull the base image, set up the working directory, and add the .bin/ folder to the PATH in the image. Then, we install the dependencies, copy the app files, and start the development server.

Running the Development Containers

To run the development environment, we prepare the docker-compose.yml file in the project folder (i.e. in the same directory where backend/ and client/ folders are located).

version: '3.7'

services:

  backend:
    build:
      context: ./backend
      dockerfile: Dockerfile
    volumes:
      - ./backend:/usr/src/app
    ports:
      - 5001:80
    environment:
      - FLASK_ENV=development

  client:
    build:
      context: ./client
      dockerfile: Dockerfile
    stdin_open: true
    volumes:
      - ./client:/usr/src/app
      - /usr/src/app/node_modules
    env_file: .env
    ports:
      - 3007:3000
    environment:
      - NODE_ENV=development
      - REACT_APP_BACKEND_SERVICE_URL=${REACT_APP_BACKEND_SERVICE_URL}
    depends_on:
      - backend

We define two services: backend and client. We bind the ./backend folder as a volume to the backend container and map the host system’s port 5001 to port 80 of the container. Also, FLASK_ENV is set to development.

The client service binds the ./client/ folder as a volume. To avoid overwriting the client’s node_modules directory when the ./client/ folder is mounted, we also bind an anonymous volume /usr/src/app/node_modules.

To keep the container from exiting after the development server starts, we also specify stdin_open: true.

The app running in this container will be accessible from the host machine via the mapped port 3007.
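To make the `ports` entries in docker-compose.yml concrete, here is a small sketch that splits the short `HOST:CONTAINER` form and prints the address each service is reachable at from the host. `split_port_mapping` is a hypothetical helper, and it only handles the two-part form used in this project.

```python
def split_port_mapping(mapping):
    """Split a short-form 'HOST:CONTAINER' mapping into two integers."""
    host_port, container_port = (int(part) for part in mapping.split(":"))
    return host_port, container_port


# The two mappings from docker-compose.yml.
mappings = {"backend": "5001:80", "client": "3007:3000"}

for service, mapping in mappings.items():
    host_port, container_port = split_port_mapping(mapping)
    print(f"{service}: http://localhost:{host_port} -> container port {container_port}")
```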

The REACT_APP_BACKEND_SERVICE_URL variable that is used by the app will receive its value from the environment. We can set its value in the .env file:

REACT_APP_BACKEND_SERVICE_URL=http://localhost:5001

Note that the .env file is excluded from the repository, so you will have to create it manually.
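For readers unfamiliar with the format, the following sketch shows how KEY=VALUE lines like the one above can be read into a dictionary. It is a simplified illustration, not the parser that docker-compose uses: quoting, escapes, and variable interpolation are deliberately left out, and `parse_env_file` is a hypothetical name.

```python
def parse_env_file(text):
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        key, separator, value = line.partition("=")
        if not separator:
            continue  # not a KEY=VALUE line
        values[key.strip()] = value.strip()
    return values


sample = "# local development settings\nREACT_APP_BACKEND_SERVICE_URL=http://localhost:5001\n"
print(parse_env_file(sample))
```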

We start both containers by running:

docker-compose up --build -d

Make sure that the containers are running:

$ docker container ls
CONTAINER ID   IMAGE                 COMMAND                  CREATED              STATUS              PORTS                    NAMES
0e4e7b0bd537   simple_cicd_client    "docker-entrypoint.s…"   About a minute ago   Up About a minute   0.0.0.0:3007->3000/tcp   simple_cicd_client_1
57ab7320f91e   simple_cicd_backend   "python ./api.py"        About a minute ago   Up About a minute   0.0.0.0:5001->80/tcp     simple_cicd_backend_1

Check the app in the browser:

CI/CD with GitLab

We are going to use GitLab to set up a pipeline that will build, test, and deploy the web app to a remote server.

We create the .gitlab-ci.yml and add this to the beginning of the file:

image: docker:stable

stages:
  - build
  - test
  - release
  - deploy

variables:
  IMAGE: ${CI_REGISTRY}/${CI_PROJECT_NAMESPACE}/${CI_PROJECT_NAME}
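The IMAGE variable is assembled from GitLab's predefined CI variables. As a rough illustration of the expansion, the sketch below substitutes example values taken from the image name that appears later in docker-compose.deploy.yml; in a real pipeline GitLab supplies these values itself, and `image_reference` is a hypothetical helper.

```python
from string import Template

# Same pattern as the IMAGE variable in .gitlab-ci.yml.
IMAGE_TEMPLATE = Template("${CI_REGISTRY}/${CI_PROJECT_NAMESPACE}/${CI_PROJECT_NAME}")


def image_reference(ci_vars, tag):
    """Expand the IMAGE pattern and append a tag such as 'backend'."""
    return f"{IMAGE_TEMPLATE.substitute(ci_vars)}:{tag}"


# Example values only; GitLab injects the real ones into each job.
example_vars = {
    "CI_REGISTRY": "registry.gitlab.com",
    "CI_PROJECT_NAMESPACE": "varinen",
    "CI_PROJECT_NAME": "simple-ci-cd",
}

print(image_reference(example_vars, "backend"))
```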

Build Stage: Backend

In this stage, we build intermediate images for the backend and client services that we will use to run tests. The backend job is defined like this:

build:backend:
  stage: build
  services:
    - docker:dind
  variables:
    DOCKER_DRIVER: overlay2
  script:
    - docker login -u $CI_REGISTRY_USER -p $CI_JOB_TOKEN $CI_REGISTRY
    - docker pull $IMAGE:backend || true
    - docker build
      --cache-from $IMAGE:backend
      --tag $IMAGE:backend
      --file ./backend/Dockerfile.ci
      --build-arg SECRET_KEY=$SECRET_KEY
      "./backend"
    - docker push $IMAGE:backend

/.gitlab-ci.yml

For the backend service, we use the docker-in-docker (dind) service to run the script. It pulls the previously built backend image (tagged :backend) to use as a build cache and rebuilds the image from the ./backend/Dockerfile.ci file. The build command also passes a SECRET_KEY build argument; the Dockerfile needs an ARG SECRET_KEY instruction to read it. The newly built image is again tagged :backend and pushed back to the GitLab project’s registry.
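The pull, build with cache, and push sequence is the same for every image in this pipeline, so it can help to see it as data. The following sketch assembles the three commands as argument lists; it only prints them and does not call Docker. `build_steps` and the registry path are hypothetical names used for illustration.

```python
import shlex


def build_steps(image, tag, dockerfile, context, build_args=None):
    """Return the pull, build, and push commands for one tagged image."""
    reference = f"{image}:{tag}"
    build = ["docker", "build",
             "--cache-from", reference,
             "--tag", reference,
             "--file", dockerfile]
    for name, value in (build_args or {}).items():
        build += ["--build-arg", f"{name}={value}"]
    build.append(context)
    return [
        ["docker", "pull", reference],  # may fail on the very first run
        build,
        ["docker", "push", reference],
    ]


for step in build_steps("registry.example.test/group/project", "backend",
                        "./backend/Dockerfile.ci", "./backend"):
    print(shlex.join(step))
```

The pull comes first because `--cache-from` can only reuse layers from an image that is available to the Docker daemon running the build.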

The Dockerfile.ci for the backend service has this content:

###########

# BUILDER #

###########

# pull official base image

FROM python:3.8.2-alpine3.11 as builder

# set work directory

WORKDIR /usr/src/app

# set environment variables

ENV PYTHONDONTWRITEBYTECODE 1

ENV PYTHONUNBUFFERED 1

# install dependencies

RUN pip install --upgrade pip

COPY ./requirements.txt .

RUN pip wheel --no-cache-dir --no-deps --wheel-dir /usr/src/app/wheels -r requirements.txt

#########

# FINAL #

#########

# pull official base image

FROM python:3.8.2-alpine3.11

# set work directory

WORKDIR /usr/src/app

# set environment variables

ENV PYTHONDONTWRITEBYTECODE 1

ENV PYTHONUNBUFFERED 1

ENV FLASK_ENV production

# install dependencies

COPY --from=builder /usr/src/app/wheels /wheels

COPY --from=builder /usr/src/app/requirements.txt .

RUN pip install --upgrade pip

RUN pip install --no-cache /wheels/*

# add app

COPY . /usr/src/app

# add and run as non-root user

RUN adduser -D myuser

USER myuser

# run gunicorn

CMD gunicorn --bind 0.0.0.0:$PORT wsgi:app

In this Dockerfile, we use a multi-stage build to reduce the resulting image size. The builder stage packages the requirements as wheels using the pip wheel command.

The final image copies the wheels and installs dependencies from them without downloading and recompiling packages. In this image, we also use a non-root account to run the production-ready gunicorn app server.

The total size of the final image is then smaller due to the use of wheels.

Build Stage: Client

The build stage for the client image is defined like this:

build:client:
  stage: build
  services:
    - docker:dind
  variables:
    DOCKER_DRIVER: overlay2
    REACT_APP_BACKEND_SERVICE_URL: $REACT_APP_BACKEND_SERVICE_URL
  script:
    - docker login -u $CI_REGISTRY_USER -p $CI_JOB_TOKEN $CI_REGISTRY
    - docker pull $IMAGE:client || true
    - docker build
      --cache-from $IMAGE:client
      --tag $IMAGE:client
      --file ./client/Dockerfile.ci
      "./client"
    - docker push $IMAGE:client

Here too, we use a container based on the docker-in-docker image to build the client image and push the result back to the project registry. Note that we are setting the environment variable REACT_APP_BACKEND_SERVICE_URL before running the build script. Its value is taken from the GitLab project’s settings, CI/CD variable section.

The file /client/Dockerfile.ci used in this stage has this content:

FROM node:13.10.1-alpine

# set working directory

WORKDIR /usr/src/app

# add `/usr/src/app/node_modules/.bin` to $PATH

ENV PATH /usr/src/app/node_modules/.bin:$PATH

ENV NODE_ENV development

# install and cache app dependencies

COPY package.json /usr/src/app/package.json

COPY package-lock.json /usr/src/app/package-lock.json

RUN npm ci

RUN npm install react-scripts@3.4.0 -g --silent

# add app

COPY . /usr/src/app

# start app

CMD ["npm", "start"]

The simplicity of the current project allows us to use basically the same Dockerfile as for the development image.

Test Stage

The test stage jobs are run on the images built in the build stage. The definition of the test stage looks like this:

test:backend:
  stage: test
  image: $IMAGE:backend
  variables:
    FLASK_ENV: development
  script:
    - cd /usr/src/app
    - python -m pytest tests -p no:warnings

test:client:
  stage: test
  image: $IMAGE:client
  script:
    - cd /usr/src/app
    - npm run test

Production Stage

In the production stage, we build the final release image(s) that will be deployed to the production server.

This project allows the services to be run from the same container. We will build the client service image first (tagged :build-react), then build the backend image (tagged :production), copy the built React application from the client image (these are just static files) to the predefined directory in the backend image. In the backend image, we set up Nginx to serve static files or to reverse proxy to the gunicorn running the Flask REST API backend.

The Production stage is defined like this:

build:production:
  stage: release
  services:
    - docker:dind
  variables:
    DOCKER_DRIVER: overlay2
  script:
    - apk add --no-cache curl
    - docker login -u $CI_REGISTRY_USER -p $CI_JOB_TOKEN $CI_REGISTRY
    - docker pull $IMAGE:build-react || true
    - docker pull $IMAGE:production || true
    - docker build
      --target build-react
      --cache-from $IMAGE:build-react
      --tag $IMAGE:build-react
      --file ./Dockerfile.deploy
      "."
    - docker build
      --cache-from $IMAGE:production
      --tag $IMAGE:production
      --file ./Dockerfile.deploy
      "."
    - docker push $IMAGE:build-react
    - docker push $IMAGE:production

The GitLab configuration uses a special Dockerfile.deploy file that has this content:

###############

# BUILD-REACT #

###############

FROM node:13.10.1-alpine as build-react

WORKDIR /app

ENV PATH /app/node_modules/.bin:$PATH

ENV NODE_ENV production

ENV REACT_APP_BACKEND_SERVICE_URL $REACT_APP_BACKEND_SERVICE_URL

COPY ./client/package*.json ./

RUN npm install

RUN npm install react-scripts@3.4.0

COPY ./client/ .

RUN npm run build

######################

# PRODUCTION-BUILDER #

######################

FROM nginx:1.17-alpine as production-builder

ENV PYTHONDONTWRITEBYTECODE 1

ENV PYTHONUNBUFFERED 1

ENV FLASK_ENV=production

WORKDIR /app

RUN apk update && \

apk add --no-cache --virtual build-deps \

openssl-dev libffi-dev gcc python3-dev musl-dev \

netcat-openbsd

RUN python3 -m ensurepip && \

rm -r /usr/lib/python*/ensurepip && \

pip3 install --upgrade pip setuptools && \

if [ ! -e /usr/bin/pip ]; then ln -s pip3 /usr/bin/pip ; fi && \

if [[ ! -e /usr/bin/python ]]; then ln -sf /usr/bin/python3 /usr/bin/python; fi && \

rm -r /root/.cache

COPY ./backend/requirements.txt ./

RUN pip install wheel

RUN pip install -r requirements.txt

RUN pip wheel --no-cache-dir --no-deps --wheel-dir /app/wheels -r requirements.txt

##############

# PRODUCTION #

##############

FROM nginx:1.17-alpine as production

ENV PYTHONDONTWRITEBYTECODE 1

ENV PYTHONUNBUFFERED 1

ENV FLASK_ENV=production

WORKDIR /app

RUN apk update && \

apk add --no-cache --virtual build-deps \

openssl-dev libffi-dev gcc python3-dev musl-dev \

netcat-openbsd

RUN python3 -m ensurepip && \

rm -r /usr/lib/python*/ensurepip && \

pip3 install --upgrade pip setuptools && \

if [ ! -e /usr/bin/pip ]; then ln -s pip3 /usr/bin/pip ; fi && \

if [[ ! -e /usr/bin/python ]]; then ln -sf /usr/bin/python3 /usr/bin/python; fi && \

rm -r /root/.cache

COPY --from=production-builder /app/wheels /wheels

COPY --from=production-builder /app/requirements.txt ./

RUN pip install wheel

RUN pip install --no-cache /wheels/*

## add permissions

RUN chown -R nginx:nginx /app && chmod -R 755 /app && \

chown -R nginx:nginx /var/cache/nginx && \

chown -R nginx:nginx /var/log/nginx && \

chown -R nginx:nginx /etc/nginx/conf.d

RUN touch /var/run/nginx.pid && \

chown -R nginx:nginx /var/run/nginx.pid

RUN touch /var/log/error.log && \

chown -R nginx:nginx /var/log/error.log

COPY ./nginx/nginx.conf /etc/nginx/nginx.conf

COPY ./nginx/default.conf /etc/nginx/conf.d/default.conf

## switch to non-root user

USER nginx

COPY --from=build-react /app/build /usr/share/nginx/html

COPY ./backend .

## run server

CMD gunicorn -b 0.0.0.0:5000 wsgi:app --daemon && \

sed -i -e 's/$PORT/'"$PORT"'/g' /etc/nginx/conf.d/default.conf && \

nginx -g 'daemon off;'

In the first part, we build the build-react image. The difference here is that in the end, we do not start the development server. Instead, we run the npm run build command that compiles the static files of the React app.

The second part creates an intermediate image production-builder that installs requirements for the Flask app and prepares wheels for the production image.

In the third part, we use an nginx image as the base. We install Python dependencies from wheels built by production-builder. We also copy the React static files from the build-react image and the Nginx configuration.

We run the gunicorn server, modify the Nginx config to use the port number passed from the environment variables, and, finally, start the Nginx server.

The Nginx configuration serves the static files of the React app and forwards requests for the API endpoint /hello to the gunicorn server running on port 5000:

server {
    listen $PORT;

    root /usr/share/nginx/html;
    index index.html index.html;

    location / {
        try_files $uri /index.html =404;
    }

    location /hello {
        proxy_pass http://127.0.0.1:5000;
        proxy_http_version 1.1;
        proxy_redirect default;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection "upgrade";
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Host $server_name;
    }
}
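The `$PORT` token in this file is not an Nginx variable; it is a placeholder that the sed command in the image's CMD replaces at container start. A minimal sketch of that substitution, assuming a plain text replacement and using the port value 8765 from the deploy stage (`render_nginx_conf` is a hypothetical helper):

```python
def render_nginx_conf(template, port):
    """Replace every literal $PORT placeholder with the given port number."""
    return template.replace("$PORT", str(port))


# Shortened stand-in for nginx/default.conf.
template = (
    "server {\n"
    "    listen $PORT;\n"
    "    location /hello {\n"
    "        proxy_set_header Host $host;\n"
    "    }\n"
    "}\n"
)

print(render_nginx_conf(template, 8765))
```

Real Nginx variables such as `$host` are left untouched because only the exact text `$PORT` is replaced.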

The release image is tagged :production and is ready to deploy.

We will also run the production container as the non-root user nginx. More details on how to set this up are here: Run Docker nginx as Non-Root-User. For this purpose, the release stage overrides the default nginx.conf file with the custom version that we keep in /nginx/nginx.conf:

worker_processes 1;

error_log /var/log/error.log warn;
pid /var/run/nginx.pid;

events {
    worker_connections 1024;
}

http {
    include /etc/nginx/mime.types;
    default_type application/octet-stream;

    log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                    '$status $body_bytes_sent "$http_referer" '
                    '"$http_user_agent" "$http_x_forwarded_for"';

    access_log /var/log/nginx/access.log main;

    sendfile on;
    #tcp_nopush on;

    keepalive_timeout 65;

    #gzip on;

    include /etc/nginx/conf.d/*.conf;
}


Deploy Stage

The definition of the deploy stage is this:

deploy:
  stage: deploy
  image: gitlab/dind:latest
  only:
    - "master"
  services:
    - docker:dind
  before_script:
    - mkdir -p ~/.ssh
    - echo "$DEPLOY_SERVER_PRIVATE_KEY" | tr -d '\r' > ~/.ssh/id_rsa
    - chmod 600 ~/.ssh/id_rsa
    - eval "$(ssh-agent -s)"
    - ssh-add ~/.ssh/id_rsa
    - ssh-keyscan -H $DEPLOYMENT_SERVER_IP >> ~/.ssh/known_hosts
  script:
    - printf "FLASK_ENV=production\nPORT=8765\nREACT_APP_BACKEND_SERVICE_URL=/\n" > environment.env
    - ssh $DEPLOYMENT_USER@$DEPLOYMENT_SERVER_IP "mkdir -p ~/${CI_REGISTRY}/${CI_PROJECT_PATH}"
    - scp -r ./environment.env ./docker-compose.deploy.yml ${DEPLOYMENT_USER}@${DEPLOYMENT_SERVER_IP}:~/${CI_REGISTRY}/${CI_PROJECT_PATH}/
    - ssh $DEPLOYMENT_USER@$DEPLOYMENT_SERVER_IP "cd ~/${CI_REGISTRY}/${CI_PROJECT_PATH}; sudo docker login -u ${CI_REGISTRY_USER} -p ${CI_REGISTRY_PASSWORD} ${CI_REGISTRY}; sudo docker pull ${CI_REGISTRY}/${CI_PROJECT_PATH}:production; sudo docker-compose -f docker-compose.deploy.yml stop; sudo docker-compose -f docker-compose.deploy.yml rm -f web; sudo docker-compose -f docker-compose.deploy.yml up -d"

In this stage, GitLab spins up a temporary container that connects to the remote server via SSH and runs a set of commands that:

- create the file environment.env with the environment variables for the production container; the value of REACT_APP_BACKEND_SERVICE_URL is set to “/” (since the REST API runs on the same host and port);
- copy the environment.env and docker-compose.deploy.yml files to a specified folder on the remote server;
- log in to the project’s GitLab registry with docker login;
- pull the newer version of the image;
- stop and remove the running containers;
- start the containers from the updated image.

These operations require that the remote server has both docker and docker-compose installed. We will also need a key pair that will allow GitLab to log in to the remote server.

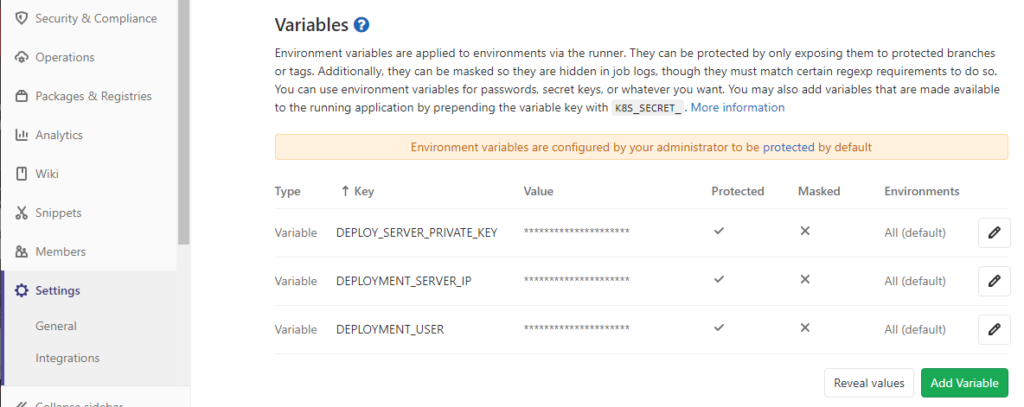

We will need three project variables for the project:

- DEPLOYMENT_SERVER_IP – address of the remote server
- DEPLOYMENT_USER – user name used to log in to the remote server
- DEPLOY_SERVER_PRIVATE_KEY – private key for the user on the remote server

Go to “Settings” > “CI/CD” and open the “Variables” section.

Preparing the Remote Server

The remote server will have to allow the deployment user to run docker and docker-compose commands with passwordless sudo. This is done by creating a file named after the user under /etc/sudoers.d/, e.g. /etc/sudoers.d/developer for the user developer:

-rw-r--r-- 1 root root 78 May 15 17:34 developer

Content:

developer ALL=(root) NOPASSWD: /usr/bin/docker, /usr/local/bin/docker-compose

The remote server will use docker-compose.deploy.yml to start the production container:

version: '3.7'

services:

web:

image: registry.gitlab.com/varinen/simple-ci-cd:production

ports:

- 8008:8765

env_file:

- environment.env

Here, the process is simple: a single service, host port 8008 mapped to the container’s port 8765, and environment variables read from environment.env.

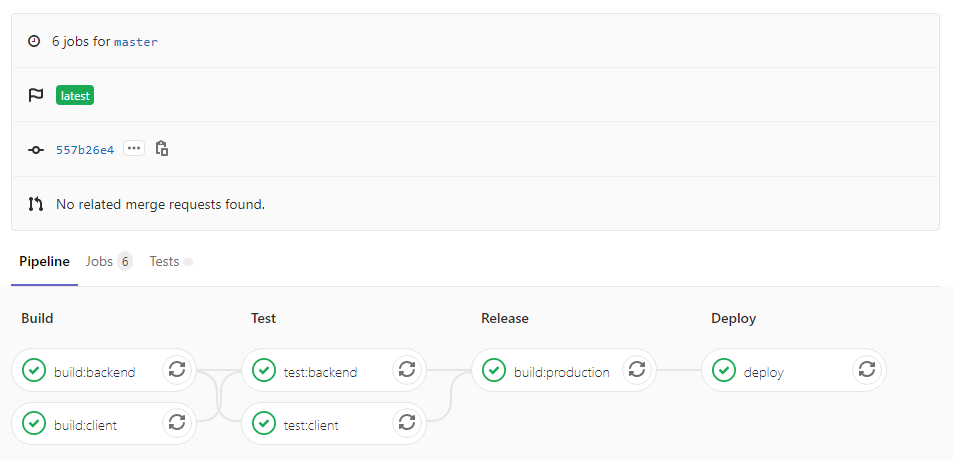

Commit the project to a repository whose origin is set to GitLab, and push the master branch upstream. This will trigger a pipeline. If everything goes right, the pipeline will look like this:

Now go to your browser and type the hostname or the IP address of your server followed by port 8008, like http://myhost.io:8008. The response should be the same as the one we saw when testing the development server.
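The same check can be scripted. The sketch below is one possible smoke test using only the Python standard library: it requests /hello and compares the JSON body with the value returned by the backend's HelloWorld resource. The function names are hypothetical, and the host you pass in is whatever address your own server has.

```python
import json
from urllib.request import urlopen


def is_expected_response(status, body):
    """Return True for a 200 response whose JSON body is {"hello": "world"}."""
    try:
        payload = json.loads(body)
    except ValueError:
        return False  # not JSON, e.g. an HTML error page
    return status == 200 and payload == {"hello": "world"}


def check_hello(base_url, timeout=5):
    """Request <base_url>/hello and validate the response."""
    url = f"{base_url.rstrip('/')}/hello"
    with urlopen(url, timeout=timeout) as response:
        status = response.status
        body = response.read()
    return is_expected_response(status, body)
```

For example, `check_hello("http://myhost.io:8008")` should return True once the production container is up; a connection error raises an exception from `urlopen` instead of returning False.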

Possible Problems

On the remote server, there may be a warning about permission being denied on the ~/.docker/config.json file. Make sure that the user has sufficient permission on the .docker directory:

sudo chown "$USER":"$USER" /home/"$USER"/.docker -R

sudo chmod g+rwx "/home/$USER/.docker" -R
